79% of Lawyers Use AI.

10% of Firms Have Policies.

The governance gap is a liability. We build the policies, frameworks, and compliance documentation your organization needs to use AI responsibly. Practical documents designed to be followed, not shelf-ware for auditors.

What You Get

Governance Documents That Actually Get Used

Every deliverable is tailored to your regulatory environment, practice areas, and existing technology stack. No boilerplate templates.

Acceptable Use Policy

Which tools are approved, which are prohibited, and under what conditions. Covers approved models, providers, personal vs. corporate accounts, and prohibited data categories. Written for enforcement, not aspiration.
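
As an illustration only, an acceptable use policy of this kind can also be expressed as a machine-checkable rule set. The sketch below is a minimal example under stated assumptions: the allowlist entry, the account type labels, and the category names are all hypothetical placeholders, not part of any delivered policy.

```python
from dataclasses import dataclass

# Hypothetical allowlist of (provider, model) pairs. A real policy would
# list the tools the organization has actually approved.
APPROVED_TOOLS = {
    ("example-provider", "example-model"),
}

# Hypothetical labels for data categories that may never be sent to an AI tool.
PROHIBITED_DATA = {"client_pii", "privileged_communication", "litigation_hold"}


@dataclass(frozen=True)
class UsageRequest:
    provider: str
    model: str
    account_type: str  # assumed values: "corporate" or "personal"
    data_categories: frozenset = frozenset()


def policy_violations(request):
    """Return the list of policy violations for a request (empty if allowed)."""
    violations = []
    if (request.provider, request.model) not in APPROVED_TOOLS:
        violations.append("tool_not_approved")
    if request.account_type != "corporate":
        violations.append("personal_account")
    # Report each prohibited category by name, in a stable order.
    blocked = sorted(request.data_categories & PROHIBITED_DATA)
    violations.extend(f"prohibited_data:{name}" for name in blocked)
    return violations
```

Returning every violation, rather than stopping at the first, gives the person reviewing the request a complete picture of what needs to change.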

Data Classification Framework

Three-tier classification for AI tool usage: prohibited (client PII, privileged communications, litigation hold), restricted (internal code, proprietary logic), and general (open-source, public documentation). Clear rules for each tier.
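
A minimal sketch of how the three tiers might be encoded, assuming a simple lookup from data category to tier. The category labels are hypothetical names derived from the examples above, and treating unknown categories as prohibited is a design assumption, not a stated rule.

```python
from enum import Enum


class Tier(Enum):
    GENERAL = 1
    RESTRICTED = 2
    PROHIBITED = 3


# Hypothetical category labels mapped to the tiers described above.
CATEGORY_TIERS = {
    "client_pii": Tier.PROHIBITED,
    "privileged_communication": Tier.PROHIBITED,
    "litigation_hold": Tier.PROHIBITED,
    "internal_code": Tier.RESTRICTED,
    "proprietary_logic": Tier.RESTRICTED,
    "open_source": Tier.GENERAL,
    "public_documentation": Tier.GENERAL,
}


def classify(categories):
    """Return the strictest tier among the given categories.

    Unknown categories fail closed and are treated as PROHIBITED.
    An empty input returns GENERAL, so callers should tag data before
    calling this function.
    """
    strictest = Tier.GENERAL
    for category in categories:
        tier = CATEGORY_TIERS.get(category, Tier.PROHIBITED)
        if tier.value > strictest.value:
            strictest = tier
    return strictest
```

Taking the strictest tier means a file that mixes public documentation with internal code is handled under the restricted rules.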

Human Oversight Requirements

Defined approval workflows for AI-generated work. No auto-approve for shell execution or file modification. Mandatory code review before commits. Pair programming model for work touching client data.
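
The oversight rules above could be sketched as a small decision function. This is an illustrative example, not a description of any specific tool's permission system: the action names and step labels are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical action kinds that must never be auto-approved.
ALWAYS_REQUIRE_APPROVAL = {"shell_execution", "file_modification"}


@dataclass(frozen=True)
class ProposedAction:
    kind: str
    touches_client_data: bool = False


def required_oversight(action):
    """Return the oversight steps a proposed AI action must pass, in order."""
    steps = []
    if action.kind in ALWAYS_REQUIRE_APPROVAL:
        steps.append("human_approval")
    if action.kind == "commit":
        steps.append("code_review")
    if action.touches_client_data:
        steps.append("pair_programming")
    return steps
```

For example, `required_oversight(ProposedAction("commit", touches_client_data=True))` returns both the code review and pair programming steps, while a read-only action with no client data returns an empty list.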

Regulatory Compliance Alignment

Mapping your AI governance to ABA Model Rules 1.1 and 1.6, EU AI Act requirements, NIST AI RMF, and relevant state regulations. SOC 2 and HIPAA alignment where applicable.

Incident Response Procedures

Reporting procedures for suspected data exposure, prompt injection attempts, and unauthorized AI tool usage. Aligned with your existing breach notification and client notification requirements.

Shadow AI Risk Assessment

Audit of what AI tools your people are already using, on what data, through which accounts. Quantified risk report with specific remediation actions and a migration path to governed alternatives.

The Framework

Five Layers of AI Governance

Effective AI governance is not a single policy document. It is a layered framework where each level constrains and informs the next. Our governance work builds on the five-layer model published in Agentic AI in Law and Finance.

We start at the top, where your organization has the most control, and work down to ensure alignment with regulatory requirements you cannot change. The result is a governance structure that holds up under scrutiny.

Voluntary frameworks

Internal policies, industry standards, and best practices your organization adopts by choice. This is where acceptable use policies, data classification, and oversight requirements live.

AI-specific regulation

EU AI Act (generally applicable from August 2026) and Colorado AI Act (June 2026), alongside reference frameworks such as the NIST AI RMF and OWASP Agentic Top 10. The regulatory window is narrowing. Formal AI policies are moving from best practice to compliance obligation.

Professional obligations

ABA Model Rule 1.1 (Competence): lawyers must understand the benefits and risks of the AI tools they use. Rule 1.6 (Confidentiality): lawyers must make reasonable efforts to prevent inadvertent or unauthorized disclosure of client information. These are not optional.

Why Now

The Regulatory Window Is Closing

Most EU AI Act obligations apply from August 2026, with requirements for certain high-risk systems following in August 2027. The Colorado AI Act takes effect in June 2026. NIST published its preliminary Cybersecurity Framework Profile for AI in December 2025. The days of treating AI governance as optional are ending.

Research shows prompt injection success rates of 85% or higher against some popular coding tools. Vendor security varies dramatically: Claude Code rates "Low" for prompt injection vulnerability, while others rate "Critical" or "High." Your governance framework needs to account for these differences.

Shadow AI is a liability

Teams using personal AI accounts create unaudited channels for client data. Without governance, every personal ChatGPT conversation is a potential confidentiality breach.

Professional responsibility requires it

ABA Model Rule 1.1, through its Comment 8, ties competence to understanding the benefits and risks of the technology you use. "We didn't have a policy" is not a defense.

Governance enables adoption

Clear policies remove the ambiguity that slows adoption. Teams move faster when they know exactly what is permitted, what is restricted, and what is prohibited.

Complete Coverage

Governance Works Best With Infrastructure and Training

Policies need technical enforcement and team adoption to be effective. We offer all three.

Enforce your governance policies with enterprise cloud deployment, SSO, audit logging, and network isolation. See AI Infrastructure →

Train your teams to use Claude Code, Codex CLI, and other agentic tools within your governance framework. See Agentic Enablement →

Build broad AI literacy across your organization with structured programs grounded in real legal scenarios. See AI Training →

Why 273 Ventures

Governance Grounded in Published Research

Published governance frameworks

Our Agentic AI in Law and Finance textbook defines the GPA Framework and ten design questions for governing agentic systems. We wrote the frameworks we implement. See also: Deploying Claude Code Safely in Your Law Firm.

Legal and technical depth

We understand ABA Model Rules, EU AI Act obligations, and SOC 2 requirements. We also understand prompt injection attack vectors, sandbox architectures, and VPC networking. Both sides inform our governance work.

Practical, not theoretical

We build and operate AI products (Kelvin Agentic OS, Kelvin Intelligence). Our governance recommendations come from running production AI systems in regulated environments, not from reading compliance checklists.

Get Started

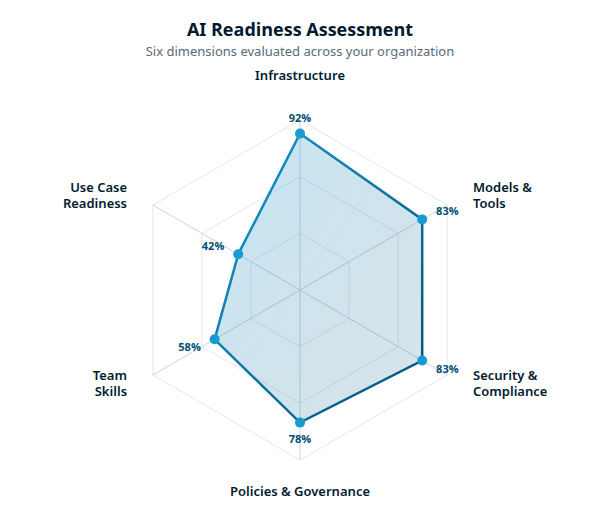

Start With a Governance Assessment

We audit your current AI policies (or the lack thereof), identify gaps, and deliver a governance package tailored to your regulatory environment. Most assessments take two weeks.