
Deploying Claude Code Safely in Your Law Firm

Your developers are already using AI coding tools on personal accounts. Here is how to deploy Claude Code through enterprise cloud infrastructure with the controls law firms actually need.

Michael Bommarito

CEO, 273 Ventures

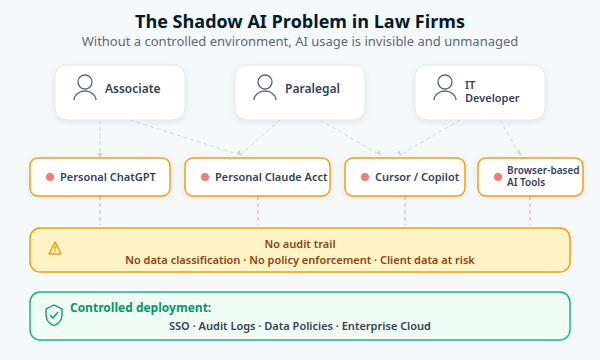

The Problem Is Already on Your Network

Industry surveys consistently show two things at once: lawyers are already using AI tools in meaningful numbers, and formal firm-wide governance still lags behind adoption. That gap should concern every managing partner and CIO reading this.

Your associates and developers are not waiting for permission. They are signing up for personal Claude accounts, installing Cursor, pasting client code into ChatGPT, and running AI-assisted coding tools with their own API keys. Those interactions can move sensitive material outside firm-controlled identity, logging, and retention controls with little or no internal visibility.

This is shadow AI. It is already happening at your firm. The question is whether you formalize it with proper controls or pretend it is not there.

What Claude Code Actually Does

Claude Code is Anthropic’s agentic coding assistant. It runs in a developer’s terminal, reads and writes files, executes shell commands, and coordinates multi-step coding tasks through natural language. A single request like “refactor the billing module” might involve reading dozens of files, planning changes, editing code across multiple files, running tests, and committing the result.

Each of those steps makes API calls to a Claude model. A typical coding session generates 5-15 API calls per minute during active use. That volume of interaction is why the deployment model matters so much: every call is an opportunity for client data to leave your network if controls are not in place.
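To make that volume concrete, here is a minimal arithmetic sketch using the 5-15 calls-per-minute range above. The two-hour session length is an illustrative assumption, not a measured figure.

```python
def api_calls_in_session(
    active_minutes: float,
    calls_per_minute: tuple[int, int] = (5, 15),
) -> tuple[float, float]:
    """Return a (low, high) estimate of API calls for one active session."""
    low_rate, high_rate = calls_per_minute
    return active_minutes * low_rate, active_minutes * high_rate


# Assumption for illustration: one developer, two hours of active use.
low, high = api_calls_in_session(active_minutes=120)
print(f"Two active hours: roughly {low:.0f} to {high:.0f} API calls")
```

Multiply that by the number of developers and working days, and the number of outbound requests that could carry client material becomes clear.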

Claude Code reached general availability in May 2025 and quickly moved from early adopter tooling into mainstream enterprise evaluation and rollout. This is not an experiment. It is production infrastructure for software teams.

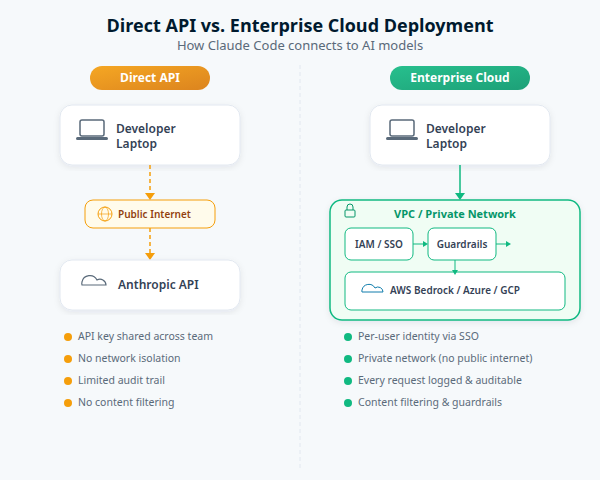

Two Deployment Models: Direct API vs. Enterprise Cloud

There are fundamentally two ways to run Claude Code.

The direct API approach is fast to set up. A developer gets an Anthropic API key, sets an environment variable, and starts coding. Five minutes from decision to first use. But that simplicity comes with trade-offs that are unacceptable in a regulated environment: shared API keys, no per-user identity, traffic over the public internet, limited audit logging, and a separate billing relationship with Anthropic that is invisible to your IT department.

The enterprise cloud approach routes Claude Code through enterprise-managed provider integrations such as AWS Bedrock, Google Cloud Vertex AI, or Microsoft Foundry. That gives you a path to cloud-native identity, centralized billing, audit logging, and private networking controls. Those controls still need to be configured correctly, but the deployment model is much closer to what regulated environments need.

The table below summarizes the differences that matter most for law firms:

| Concern | Direct API | Enterprise Cloud |
|---|---|---|
| Authentication | Usually API keys | Can integrate with cloud IAM / SSO patterns |
| Network path | Usually public internet | Can be routed through private networking controls |
| Audit trail | Limited firm-side visibility | Stronger cloud-native audit options |
| Data residency | Provider-managed | Better regional and provider control |
| Content filtering | Limited | Guardrails may be available depending on provider |
| Compliance posture | Depends on vendor plan | Depends on the cloud service and configured controls |
| Cost visibility | Separate vendor billing | Centralized cloud billing and attribution options |

For a law firm handling client matters, the enterprise cloud path is the only responsible choice. The direct API is fine for individual experimentation. It is not fine for anything touching client work.
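One way to enforce that choice on developer machines is a preflight check that refuses to proceed when direct API credentials are present or no cloud provider is selected. The sketch below is a minimal illustration, not a complete control. It assumes the environment variable names that Anthropic documents for Claude Code (`ANTHROPIC_API_KEY`, `CLAUDE_CODE_USE_BEDROCK`, `CLAUDE_CODE_USE_VERTEX`, `AWS_REGION`). Verify those names against the current documentation for the version you deploy. The function name `preflight_problems` is hypothetical.

```python
import os

# Assumption: these flags follow Anthropic's published Claude Code settings
# for Bedrock and Vertex AI. Confirm them before relying on this check.
PROVIDER_FLAGS = ("CLAUDE_CODE_USE_BEDROCK", "CLAUDE_CODE_USE_VERTEX")


def preflight_problems(env: dict[str, str]) -> list[str]:
    """Return reasons this environment does not match the enterprise path."""
    problems = []
    if env.get("ANTHROPIC_API_KEY"):
        problems.append("ANTHROPIC_API_KEY is set: direct API credentials present")
    enabled = [flag for flag in PROVIDER_FLAGS if env.get(flag) == "1"]
    if not enabled:
        problems.append("no enterprise cloud provider flag is enabled")
    if "CLAUDE_CODE_USE_BEDROCK" in enabled and not env.get("AWS_REGION"):
        problems.append("AWS_REGION is not set for Bedrock")
    return problems


if __name__ == "__main__":
    for problem in preflight_problems(dict(os.environ)):
        print("BLOCKED:", problem)
```

A check like this only catches honest misconfiguration. It complements, and does not replace, network egress rules and identity controls.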


The Security Model That Makes This Work

Claude Code’s built-in security goes deeper than most firms realize.

Sandboxing. Claude Code uses operating-system-level sandboxing rather than relying only on application prompts. Anthropic documents Apple Seatbelt on macOS and a Linux sandboxing architecture that constrains filesystem and process behavior at the OS layer. These are not just UX permission dialogs. They are intended to create kernel-enforced boundaries around what the tool and its subprocesses can do.

Permission model. Claude Code is safer when firms require human confirmation before shell commands or sensitive actions. Anthropic also exposes less restrictive modes, so this is something you should enforce through policy, training, and deployment standards rather than assume from the product default alone.
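A deployment standard of this kind can be checked mechanically. The sketch below audits a parsed Claude Code settings file for modes that weaken human confirmation. The key and mode names (`permissions`, `defaultMode`, `acceptEdits`, `bypassPermissions`, and a blanket `Bash` allow rule) are assumptions based on Anthropic's settings documentation and should be confirmed for your version. `permission_findings` is a hypothetical helper name.

```python
import json

# Assumption: these mode names mirror Claude Code's documented permission
# modes. Confirm the exact keys and values for the version you deploy.
DISALLOWED_MODES = {"acceptEdits", "bypassPermissions"}


def permission_findings(settings: dict) -> list[str]:
    """Return policy violations found in a parsed settings file."""
    findings = []
    permissions = settings.get("permissions", {})
    mode = permissions.get("defaultMode", "default")
    if mode in DISALLOWED_MODES:
        findings.append(f"defaultMode '{mode}' reduces or skips human confirmation")
    if "Bash" in permissions.get("allow", []):
        findings.append("blanket 'Bash' allow rule pre-approves every shell command")
    return findings


# Illustrative input only, not a real firm configuration.
sample = json.loads('{"permissions": {"defaultMode": "acceptEdits", "allow": ["Bash"]}}')
for finding in permission_findings(sample):
    print("POLICY VIOLATION:", finding)
```

Running a check like this in device management or CI gives the policy a technical backstop rather than relying on training alone.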

Prompt injection resistance. A January 2026 academic study tested prompt injection attacks across major AI coding tools. Claude Code received the lowest vulnerability rating (“Low”) due to its confirmation requirements and sandboxed MCP servers. Other tools rated “Critical” or “High.” The risk is concrete: when your developers clone an unfamiliar repository, the code comments, configuration files, and documentation in that repo could contain hidden instructions designed to hijack the AI agent. Among the tools tested, Claude Code’s architecture provided the strongest defense against this attack vector, but that advantage depends on keeping confirmation prompts enabled.

Network isolation. When deployed through AWS Bedrock, Google Cloud Vertex AI, or Microsoft Foundry, you can pair the model integration with VPC endpoints, Private Service Connect, or private endpoints and strict egress rules. That does not happen automatically. It has to be designed and configured. But when it is, firms can reduce data movement over the public internet and sharply narrow where agent traffic is allowed to go.
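The “strict egress rules” idea reduces to an allowlist decision: a destination is permitted only if it exactly matches an approved host or sits under an approved domain. The sketch below shows that logic in isolation. The hostnames are placeholders chosen for illustration. In practice the rule lives in your firewall, proxy, or cloud security groups rather than in application code.

```python
def is_egress_allowed(host: str, allowed_hosts: set[str], allowed_domains: set[str]) -> bool:
    """Return True only for exact approved hosts or subdomains of approved domains."""
    host = host.lower().rstrip(".")
    if host in allowed_hosts:
        return True
    # Require a dot boundary so "evil-example.com" never matches "example.com".
    return any(host.endswith("." + domain) for domain in allowed_domains)


# Placeholder values for illustration. Substitute your own endpoints.
ALLOWED_HOSTS = {"bedrock-runtime.us-east-1.amazonaws.com"}
ALLOWED_DOMAINS = {"internal.example-firm.com"}

for destination in ("bedrock-runtime.us-east-1.amazonaws.com", "api.unknown-vendor.com"):
    verdict = "allow" if is_egress_allowed(destination, ALLOWED_HOSTS, ALLOWED_DOMAINS) else "deny"
    print(destination, "->", verdict)
```

Default-deny is the important design choice here: anything not explicitly approved is blocked, which is what narrows where agent traffic can go.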

What Your Acceptable Use Policy Needs

Technology controls are necessary but not sufficient. Every law firm deploying agentic AI tools needs a written policy that addresses these areas.

In our book Agentic AI in Law and Finance, we present a five-layer governance stack for agentic systems: foundational law, professional obligations, sector-specific regulation, AI-specific regulation, and voluntary frameworks. No single layer covers everything. An acceptable use policy for Claude Code sits at the intersection of several: professional duty (ABA Model Rules), data protection law (state and federal), and voluntary standards (SOC 2, NIST AI RMF). Getting this right requires understanding how those layers interact.

Approved tools and providers. Name the specific tools that are authorized and the specific cloud provider through which they must be accessed. “Claude Code via AWS Bedrock” is a policy. “AI tools are available” is not.

Data classification tiers. Not all code and data carry the same risk. Client PII, privileged communications, and litigation hold materials should never be processed through AI tools without specific DLP controls and zero-data-retention guarantees. Internal tooling code and open-source contributions carry lower risk. Your policy should define at least three classification tiers with clear handling rules for each.
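A three-tier scheme can be written down precisely enough to test. The sketch below encodes the two tiers described above plus a hypothetical middle tier. The tier names and the middle tier's handling rule are assumptions for illustration, and each firm should set its own.

```python
from enum import Enum


class Tier(Enum):
    RESTRICTED = "restricted"      # client PII, privileged communications, litigation hold
    CONFIDENTIAL = "confidential"  # hypothetical middle tier, e.g. client code without PII
    INTERNAL = "internal"          # internal tooling code, open-source contributions


def may_use_ai_tool(tier: Tier, dlp_enabled: bool, zero_retention: bool) -> bool:
    """Decide whether material in a tier may be processed by an approved AI tool."""
    if tier is Tier.RESTRICTED:
        # From the policy text: requires both DLP controls and zero data retention.
        return dlp_enabled and zero_retention
    if tier is Tier.CONFIDENTIAL:
        # Assumed rule for the illustrative middle tier.
        return zero_retention
    return True


print(may_use_ai_tool(Tier.RESTRICTED, dlp_enabled=True, zero_retention=False))
print(may_use_ai_tool(Tier.INTERNAL, dlp_enabled=False, zero_retention=False))
```

Writing the rules as a function forces the policy to answer edge cases explicitly instead of leaving them to individual judgment.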

Human oversight requirements. Claude Code is an agentic system: it perceives its environment (your codebase), pursues goals (your instructions), and takes actions (file edits, shell commands). Our GPA Framework (Goal, Perception, Action) provides a structured way to reason about what oversight each of those dimensions requires. At minimum, all AI-generated code should be reviewed by a qualified developer before being committed. Agentic tools should operate as pair programmers, not autonomous agents, for work that touches client systems. Auto-approve modes should be prohibited.

ABA Model Rules considerations. Rule 1.1 (Competence) requires attorneys to understand the technology they use. Rule 1.6 (Confidentiality) requires reasonable measures to prevent unauthorized disclosure of client information. An agentic tool that sends client code to a third-party API without encryption, audit logging, or data retention controls likely fails both obligations. We cover these professional duty implications in depth in Chapter 3 of Agentic AI in Law and Finance.

A Realistic Timeline

Getting from “we should do something about AI” to “our team is using Claude Code safely in production” does not require a six-month IT project.

Weeks 1-2: Assessment. Audit what tools people are already using. Evaluate your cloud infrastructure readiness. Identify the gaps in policy, security, and skills. Produce a prioritized roadmap.

Weeks 3-4: Secure foundation. Configure enterprise cloud access, integrate with your identity provider, set up audit logging and cost controls, and draft acceptable use policies. The technical setup itself takes days, not weeks. The governance work takes the remaining time.

Week 5+: Training and rollout. Start with a pilot group. Run guided workshops where developers work with Claude Code on real problems from their own codebase. Build internal champions who can sustain adoption across the firm.

The cost is usually manageable, but you should not promise a fixed number without measuring your own workloads. Actual spend depends heavily on model selection, prompt volume, caching behavior, and how aggressively teams use the tool during active coding sessions. The right way to budget is to run a pilot, measure token consumption on representative matters and repositories, and then set model and approval policies from real usage data.
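Once a pilot has produced token counts, the projection itself is simple arithmetic. The sketch below shows the calculation. The token volumes and per-million-token prices are placeholders, not actual provider pricing, so substitute your measured usage and your cloud provider's current rates.

```python
def monthly_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
    developers: int = 1,
) -> float:
    """Project monthly spend from per-developer token counts measured in a pilot."""
    per_developer = (
        input_tokens / 1_000_000 * input_price_per_mtok
        + output_tokens / 1_000_000 * output_price_per_mtok
    )
    return per_developer * developers


# Placeholder pilot figures and prices, for illustration only.
estimate = monthly_cost_usd(
    input_tokens=40_000_000,
    output_tokens=5_000_000,
    input_price_per_mtok=3.0,
    output_price_per_mtok=15.0,
    developers=10,
)
print(f"Projected monthly spend: ${estimate:,.2f}")
```

This simple model ignores caching discounts and differences between models, both of which the pilot data should capture before any budget is fixed.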

What We See Firms Getting Wrong

Three patterns appear repeatedly in the firms we advise.

Waiting for perfection. Some firms delay any AI deployment until they have a comprehensive policy, full security review, and executive alignment. By the time that process completes, their developers have been using uncontrolled personal accounts for months. A controlled pilot with basic policies is safer than no policy at all.

Treating all tools equally. The security posture of AI coding tools varies dramatically. Claude Code’s confirmation prompts and OS-level sandboxing, when kept enforced, are fundamentally different from tools that rely on auto-approve modes and unsandboxed plugin systems. Your evaluation should weight security architecture heavily, not just feature lists.

Underestimating the governance work. The cloud configuration is the easy part. Writing practical acceptable use policies, defining data classification tiers, building training programs, and establishing review processes for AI-generated code takes more effort. It is also the work that actually protects the firm.

Getting Started

We built our Agentic Enablement program to handle exactly this problem. The engagement starts with a two-week assessment: we audit your current AI tool usage, evaluate your infrastructure, and produce a scored readiness report with a prioritized action plan.

From there, we build the secure foundation (cloud deployment, identity integration, governance documents) and train your teams through guided workshops using our proprietary Kelvin Simulator. The goal is not just deployment but adoption: teams that use the tools effectively and safely.

For the governance foundations behind this work, our book Agentic AI in Law and Finance is available free under CC BY 4.0. It covers the GPA Framework, ten design questions for agentic systems, and a complete governance model for regulated industries. A paperback edition is also available on Amazon.

If your firm is ready to move past uncontrolled AI usage, start with an assessment.